Epileptic Seizure Classification with Patient-level and Video-level Contrastive Pretraining

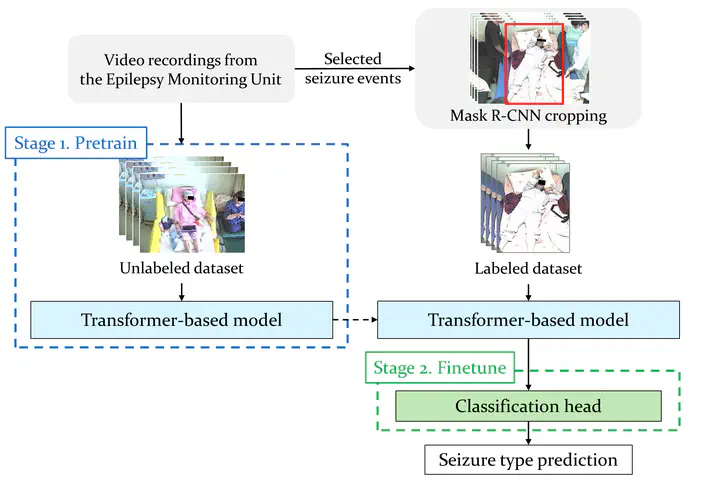

Model structure overview.

Abstract

Accurate classification of epileptic seizure types through seizure semiology analysis demands significant clinical expertise. While previous studies have employed various action recognition modules, the scarcity of labeled clinical videos has hindered the deployment of larger models. In this study, we use unlabeled data to pretrain a transformer-based model with a contrastive loss, taking advantage of information that requires no additional annotation from medical professionals. We maximize the similarity between embeddings from the same patient and video while minimizing the similarity between embeddings from different patients and videos. Subsequently, a classification head is fine-tuned to distinguish temporal lobe epilepsy (TLE) from extratemporal lobe epilepsy (exTLE). Our model achieves a 5-fold cross-validation accuracy of 0.93 and an F1 score of 0.88 at the video level (N = 57). It outperforms other state-of-the-art seizure classification models, demonstrating the efficacy of our approach. This suggests potential applications in clinical practice, where unlabeled data could serve as a valuable aid in improving seizure classification accuracy and patient care.
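To make the pretraining objective concrete, the following is a minimal sketch of a contrastive loss in which clips sharing a group identifier (a patient or a video) are treated as positives and all other clips as negatives. The abstract does not give the exact loss, so the InfoNCE-style form, the temperature value, the function name `group_contrastive_loss`, and the choice to sum a patient-level and a video-level term are all assumptions made for illustration, not the authors' implementation.

```python
import math


def l2_normalize(vec):
    """Scale a non-zero vector to unit length so dot products are cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def group_contrastive_loss(embeddings, group_ids, temperature=0.1):
    """InfoNCE-style loss (assumed form): clips with the same group id are positives."""
    z = [l2_normalize(e) for e in embeddings]
    n = len(z)
    per_anchor = []
    for i in range(n):
        positives = [j for j in range(n) if j != i and group_ids[j] == group_ids[i]]
        if not positives:
            continue  # an anchor without any positive contributes nothing
        # Temperature-scaled cosine similarity between anchor i and every other clip.
        logits = {
            j: sum(a * b for a, b in zip(z[i], z[j])) / temperature
            for j in range(n)
            if j != i
        }
        # Log-sum-exp over all non-anchor clips, stabilised by subtracting the max.
        m = max(logits.values())
        log_denom = m + math.log(sum(math.exp(v - m) for v in logits.values()))
        # Average negative log-probability of picking a positive for this anchor.
        per_anchor.append(
            -sum(logits[j] - log_denom for j in positives) / len(positives)
        )
    return sum(per_anchor) / len(per_anchor) if per_anchor else 0.0


# Toy data: four 2-D clip embeddings with hypothetical patient and video ids.
clips = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
patient_ids = ["p1", "p1", "p2", "p2"]
video_ids = ["v1", "v2", "v3", "v3"]

# Assumed combination: an unweighted sum of the patient-level and video-level terms.
loss = group_contrastive_loss(clips, patient_ids) + group_contrastive_loss(clips, video_ids)
```

In this sketch the loss is small when clips from the same patient or video already lie close together in embedding space and grows when they are closer to clips from other groups, which is the behavior the pretraining stage is described as encouraging.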